In 2026, the term “AI assistant” has become increasingly broad.

A few years ago, it mostly referred to a conversational chatbot. You ask a question, the chatbot replies, and the interaction ends when the chat ends. This model is still important, but it no longer describes the full landscape.

AI assistants now appear in browsers, desktops, terminals, IDEs, enterprise workspaces and automation platforms. Some help us write and think. Some help us organise knowledge. Some can access files, run commands, edit code, operate applications or connect to external systems.

A useful way to understand this space is to look at four dimensions: where the assistant runs, how autonomous it is, how persistent it is, and what it can access.

A framework for understanding AI assistants

The first dimension is the surface. This refers to where the assistant lives. Some assistants are browser-based, such as ChatGPT and Google NotebookLM. Some are desktop-based, with access to local files and applications, such as Claude Cowork. Some live in the terminal, such as Claude Code. Others run on servers and respond to events in the background.

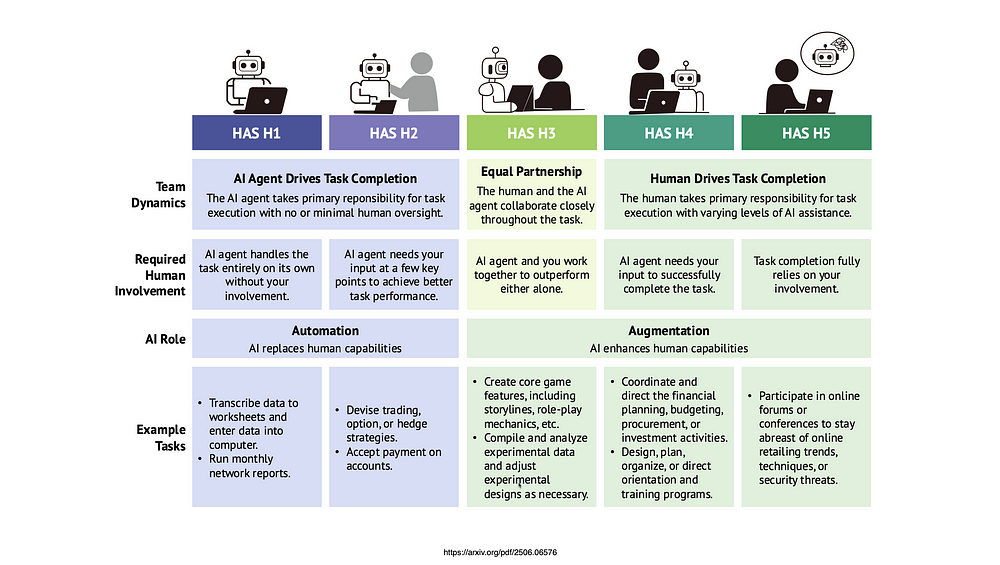

The second dimension is autonomy. A basic assistant waits for instructions and replies. A more advanced assistant can use tools, plan steps and execute a workflow. A highly autonomous assistant can monitor events and take action with less direct prompting.

The third dimension is persistence. Some assistants are session-based. Once the chat ends, the working context is mostly gone. Others are project-based, where context persists within a notebook, workspace or repository. The more interesting category is the persistent assistant that continues running across time.

The fourth dimension is scope of access. An assistant that only sees the current prompt is very different from one that can access documents, email, calendar, source code, local files or enterprise systems. The more access an assistant has, the more useful it becomes, and the riskier.

| Dimension | What it means | Examples |
|---|---|---|
| Surface | Where the assistant lives | Browser, desktop, terminal, IDE, server, mobile |
| Autonomy | How much it can do without step-by-step instruction | Answer, assist, act, monitor |
| Persistence | Whether it continues across time | Session, project, long-running, continuous |
| Scope of access | What it can see or control | Web, files, apps, email, calendar, enterprise systems |

This gives us a simple way to classify AI assistants in 2026. They mainly come in two variants: interactive, session-based assistants, and autonomous, persistent assistants.
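
To make the framework concrete, here is a minimal Python sketch that records the four dimensions as a small data structure and derives the variant from them. The class names, the enum values and the rule inside `variant` are illustrative assumptions rather than an established taxonomy; the only thing taken from the table is the list of example values.

```python
from dataclasses import dataclass
from enum import Enum


class Autonomy(Enum):
    ANSWER = 1
    ASSIST = 2
    ACT = 3
    MONITOR = 4


class Persistence(Enum):
    SESSION = 1
    PROJECT = 2
    LONG_RUNNING = 3
    CONTINUOUS = 4


@dataclass(frozen=True)
class AssistantProfile:
    name: str
    surface: str            # e.g. "browser", "desktop", "terminal", "server"
    autonomy: Autonomy
    persistence: Persistence
    access: frozenset       # e.g. {"web", "files", "email"}


def variant(profile: AssistantProfile) -> str:
    """Assumed rule: anything that keeps running across time counts as persistent."""
    if profile.persistence in (Persistence.LONG_RUNNING, Persistence.CONTINUOUS):
        return "autonomous, persistent"
    return "interactive, session-based"


# Two hypothetical profiles, not descriptions of real products.
browser_chat = AssistantProfile(
    "browser chat", "browser", Autonomy.ASSIST, Persistence.SESSION, frozenset({"web"})
)
home_gateway = AssistantProfile(
    "self-hosted gateway", "server", Autonomy.MONITOR, Persistence.CONTINUOUS,
    frozenset({"email", "calendar", "files"}),
)

print(variant(browser_chat))
print(variant(home_gateway))
```

In this sketch only persistence decides the variant. A stricter rule could also require a minimum level of autonomy, which is a design choice the framework leaves open.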

Interactive, session-based assistants

The first variant is the interactive assistant.

This is the most common form today. The user starts a session, gives instructions, reviews the response, and continues the interaction. The assistant may be very capable, but the user is still actively in the loop.

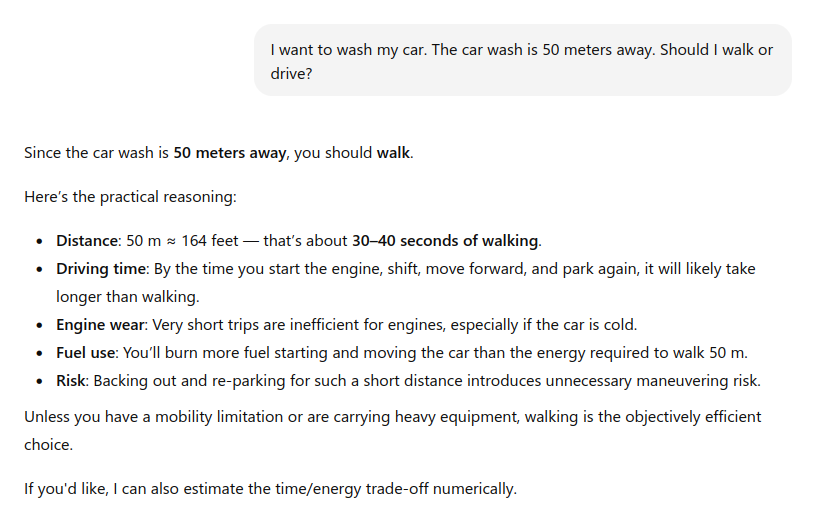

Browser-based assistants are the most familiar example. ChatGPT, Claude, Gemini and NotebookLM fall broadly into this category, although they serve different purposes. ChatGPT is general purpose. NotebookLM is more focused on working with sources and organising knowledge. Google describes NotebookLM as an AI research and thinking tool built around user-provided sources.

This category has expanded quickly. Browser-based assistants are no longer limited to answering questions. They can search, analyse files, generate documents, create images, write code, produce tables, and in some cases connect to external tools. OpenAI’s ChatGPT release notes show this direction clearly, with updates around connectors, custom connectors using MCP, and deeper integration with external systems.

There is also a desktop-based form of the interactive assistant. This is more powerful because it can work closer to the user’s actual environment. A desktop assistant can potentially see files, interact with applications, run commands and produce output directly on the machine.

Claude Cowork is an example of this direction. Anthropic describes it as an assistant that can work on the user’s computer, local files and applications to return a finished deliverable. Its documentation also highlights capabilities such as direct local file access, sub-agent coordination and generation of polished outputs such as spreadsheets, presentations and formatted documents.

This changes the nature of the assistant. It is no longer just answering a question. It is helping to perform work.

A third form is the terminal-based coding assistant. Claude Code is a good example. It is positioned as an agentic coding tool that lives in the terminal, understands a codebase, edits files, runs commands and helps developers ship faster.

This is a big shift from traditional code completion. The assistant is not just suggesting the next line of code. It can inspect the codebase, modify multiple files, run tests and participate in the development workflow.

Many users still think of these tools as chatbots. That is increasingly inaccurate. Once the assistant can access files, run commands or modify files on the user's behalf, it is closer to an operator.

Autonomous, persistent assistants

The second variant is the autonomous, persistent assistant.

This is less common, but more interesting.

A persistent assistant does not exist only during a chat session. It runs continuously, usually on a user’s machine, a homelab, a VPS or a managed cloud workspace. It can respond to triggers, schedules, webhooks, messages, files, system alerts or other events.

This makes it more like a butler than a chatbot.

A chatbot waits for the user to ask. A butler prepares, monitors, reminds and coordinates.

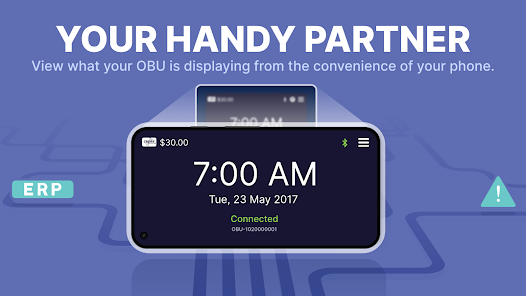

OpenClaw is a good example of this direction. It describes itself as a personal AI assistant that runs on your own devices and answers through the channels you already use. Its documentation describes a self-hosted gateway that can connect channels such as Discord, Google Chat, iMessage, Matrix, Microsoft Teams, Signal, Slack, Telegram, WhatsApp and others to AI agents.

This is an important design choice. The assistant is not locked inside a single browser tab. It can sit inside the communication channels where the user already works.

Hermes Agent from Nous Research is another example. It is described as an open-source agent that can live on a server, remember what it learns, build skills from experience and become more capable the longer it runs. Its GitHub repository positions it as a self-improving AI agent with a built-in learning loop.

These tools point to an important shift. The assistant is no longer tied to a browser session. It can live closer to the user’s actual workflow: in chat apps, terminals, servers, automation tools and connected systems.

For example, a persistent assistant could monitor incoming email, summarise important threads, draft replies, detect calendar conflicts, prepare weekly briefings, track changes to a website, create project tasks, or notify the user when something important happens.

This is where AI assistants start to overlap with workflow automation.

The difference is that traditional automation is usually rule-based. If this happens, then do that. A persistent AI assistant can potentially interpret context, make judgement calls, summarise ambiguous information and decide what action is needed.
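
The contrast can be sketched in a few lines of Python. This is an illustration under stated assumptions: `Event`, the action names and `fake_judge` are hypothetical, and `fake_judge` is a keyword stub standing in for a real model call so that the example runs on its own.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Event:
    kind: str   # e.g. "email", "calendar", "webhook"
    text: str


def rule_based(event: Event) -> str:
    """Traditional automation: a fixed if-this-then-that rule."""
    if event.kind == "email" and "invoice" in event.text.lower():
        return "file_under_finance"
    return "ignore"


def assistant_based(event: Event, judge: Callable[[str], str]) -> str:
    """Assistant-style handling: the `judge` callable picks an action from context."""
    allowed = {"notify_user", "draft_reply", "ignore"}
    prompt = (
        f"Event type: {event.kind}\n"
        f"Content: {event.text}\n"
        f"Pick one of: {sorted(allowed)}"
    )
    choice = judge(prompt)
    # Anything outside the allowed set is escalated to the user instead of executed.
    return choice if choice in allowed else "notify_user"


def fake_judge(prompt: str) -> str:
    """Stand-in for a model call; a real deployment would query an LLM here."""
    return "draft_reply" if "can we move" in prompt.lower() else "ignore"


reschedule = Event("email", "Can we move Thursday's review to Friday?")
print(rule_based(reschedule))                    # no rule matches this email
print(assistant_based(reschedule, fake_judge))   # the judge proposes a reply
```

The rule-based handler only reacts to what its author anticipated. The assistant-style handler can react to an unanticipated request, which is why it constrains the judge's answer to a fixed set of actions and falls back to notifying the user.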

This is also where enterprise adoption becomes more complicated. Assistants such as OpenClaw and Hermes are interesting because they suggest a future where users or organisations may run their own AI assistants on infrastructure they control, rather than relying only on SaaS-based assistants. That may help with data control, customisation and integration. It also creates new responsibilities around security, monitoring, cost and governance.

The persistent assistant is therefore not just a more convenient chatbot. It is a new operating model: an always-available agent that can remember, monitor, act and coordinate across systems.

From conversation to delegation

The important shift is from conversation to delegation.

In the old model, the user asks a question and receives an answer.

In the new model, the user gives a goal and the assistant works towards an outcome.

For example:

| Conversation | Delegation |
|---|---|
| Summarise this article. | Monitor this topic and brief me every Monday. |
| Explain this code. | Fix the bug, run the tests and prepare the pull request. |
| Help me draft an email. | Watch for replies, extract action items and update my task list. |
| Analyse this document. | Compare this document against the latest policy and flag changes. |

This is why the word “agent” is now used so often. The assistant is no longer only generating text. It is increasingly expected to plan, use tools and act.

The rise of the Model Context Protocol (MCP) is part of this shift. MCP provides a standard way for AI applications to connect to external data sources, tools and workflows. In practical terms, this makes it easier for assistants to move beyond static chat and interact with real systems.
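
As a rough illustration of what that standard looks like on the wire, MCP messages are JSON-RPC 2.0 messages, and a client discovers and invokes tools with the `tools/list` and `tools/call` methods. The Python sketch below only builds the request payloads. The helper `jsonrpc_request`, the tool name `search_documents` and its arguments are hypothetical, and the sketch leaves out the transport and the initialisation handshake that a real session needs.

```python
import json
from itertools import count
from typing import Optional

_ids = count(1)  # simple source of increasing request ids


def jsonrpc_request(method: str, params: Optional[dict] = None) -> str:
    """Serialise a JSON-RPC 2.0 request; `params` is omitted when not given."""
    message = {"jsonrpc": "2.0", "id": next(_ids), "method": method}
    if params is not None:
        message["params"] = params
    return json.dumps(message)


# Ask the server which tools it offers.
list_tools = jsonrpc_request("tools/list")

# Call one tool by name. The tool and its arguments are made up for illustration.
call_tool = jsonrpc_request(
    "tools/call",
    {"name": "search_documents", "arguments": {"query": "leave policy"}},
)

print(list_tools)
print(call_tool)
```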

The trust problem

The more capable the assistant becomes, the more important trust becomes.

For a simple chatbot, the main concern is whether the answer is correct. For an agentic assistant, the concern is broader. Did it edit the right file? Did it send the right message? Did it expose confidential information? Did it use an outdated source? Did it complete the task correctly? Can the action be reversed?

This is especially important for desktop-based and persistent assistants.

When an assistant can access the filesystem, run shell commands or connect to enterprise applications, the risk is no longer just hallucination. The risk is action.

This means permission boundaries matter. Audit logs matter. Human approval matters. Reversibility matters. Data governance matters.
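
One way to picture these controls working together is a small gate that every action passes through. The Python sketch below is an assumption-laden illustration, not a reference design: `Action`, `Gate` and the rule that irreversible actions always need approval are hypothetical choices.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Callable, Optional


@dataclass
class Action:
    name: str
    run: Callable[[], str]
    undo: Optional[Callable[[], None]] = None   # None means the action is irreversible
    needs_approval: bool = True


@dataclass
class Gate:
    approve: Callable[[Action], bool]           # human-in-the-loop callback
    audit_log: list = field(default_factory=list)

    def execute(self, action: Action) -> Optional[str]:
        # Irreversible actions always go to a human, whatever the flag says.
        must_ask = action.needs_approval or action.undo is None
        approved = self.approve(action) if must_ask else True
        result = action.run() if approved else None
        # Every attempt is recorded, including the ones that were refused.
        self.audit_log.append({
            "time": datetime.now(timezone.utc).isoformat(),
            "action": action.name,
            "asked_human": must_ask,
            "approved": approved,
            "reversible": action.undo is not None,
        })
        return result


gate = Gate(approve=lambda action: False)   # a reviewer who refuses everything
refused = gate.execute(Action("send_email", run=lambda: "sent"))
renamed = gate.execute(
    Action("rename_file", run=lambda: "renamed", undo=lambda: None, needs_approval=False)
)
print(refused, renamed, len(gate.audit_log))
```

The refused email and the permitted rename both end up in the audit log, so the record shows what the assistant tried to do as well as what it actually did.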

The more useful an AI assistant becomes, the more dangerous it is to treat it as “just a chatbot”.

Implications for users

For individual users, the skill required is also changing.

Prompting is still useful, but it is not enough. Users need to learn how to delegate work clearly, review outputs critically, and decide which tasks should or should not be given to an assistant.

This is especially true for coding agents. The assistant may be able to write code quickly, but the human still needs to understand architecture, security, maintainability and correctness. Otherwise, the result may look complete but contain hidden problems.

The same applies to writing, research and administration. AI can produce output quickly, but the user remains responsible for judgement.

Implications for organisations

For organisations, AI assistants should not be treated as isolated productivity tools.

They are becoming part of the enterprise architecture.

The key question is not just which model is best. Organisations also need to ask:

- What data can the assistant access?

- Can it access outdated or sensitive information?

- Can it take write actions?

- Which actions require approval?

- Is there an audit trail?

- Can users see what sources were used?

- Can the assistant be restricted by role, department or project?

- What happens when it fails halfway?

These are governance questions, not just technology questions.
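
Several of these questions can be written down as an explicit policy instead of being left implicit in a tool's settings. The Python sketch below assumes a deliberately simple model: the role names, data sources and the `decide` function are hypothetical, and a real deployment would more likely rely on the organisation's existing identity and access management.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RolePolicy:
    readable: frozenset            # data sources the assistant may read for this role
    writable: frozenset            # systems it may change
    approval_required: frozenset   # write targets that need human sign-off


POLICIES = {
    "engineer": RolePolicy(
        readable=frozenset({"source_code", "docs"}),
        writable=frozenset({"source_code"}),
        approval_required=frozenset({"source_code"}),
    ),
    "analyst": RolePolicy(
        readable=frozenset({"docs", "crm"}),
        writable=frozenset(),
        approval_required=frozenset(),
    ),
}


def decide(role: str, operation: str, target: str) -> str:
    """Return "allow", "needs_approval" or "deny"; unknown roles and operations are denied."""
    policy = POLICIES.get(role)
    if policy is None:
        return "deny"
    if operation == "read":
        return "allow" if target in policy.readable else "deny"
    if operation == "write" and target in policy.writable:
        return "needs_approval" if target in policy.approval_required else "allow"
    return "deny"


print(decide("analyst", "read", "crm"))
print(decide("analyst", "write", "crm"))
print(decide("engineer", "write", "source_code"))
```

Denying by default means that a new role or a new system starts with no access until someone decides otherwise, which keeps the governance decision with people.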

This is particularly important because assistants are increasingly connected to systems of record: email, calendar, documents, CRM, project management tools, source code repositories and internal knowledge bases.

OpenAI’s ChatGPT Enterprise and Edu release notes show this direction in enterprise environments, with workspace agents that can support repeatable tasks and business workflows across connected apps. This is not just about productivity. It is about how work is coordinated.

Conclusion

In May 2026, AI assistants are no longer just chatbots.

They are splitting into two broad forms. The first is interactive and session-based. These assistants help users write, think, code, research and operate tools during an active session. The second is autonomous and persistent. These assistants run across time, respond to triggers, connect to systems and behave more like digital butlers.

The boundary between the two will continue to blur.

A browser-based assistant may gain more integrations. A coding assistant may become more autonomous. A desktop assistant may operate more applications. A server-based assistant may become a personalised workflow engine.

The key question is therefore changing.

It is no longer just “What can the AI answer?” It is increasingly “What should the AI be allowed to do?”