It’s been less than five months since Austrian developer Peter Steinberger pushed a weekend project called “WhatsApp Relay” to GitHub. Since then, that project — renamed from Clawdbot to Moltbot and finally to OpenClaw — has exploded to over 247,000 GitHub stars, drawn millions of visitors, and been called “the next ChatGPT” by NVIDIA’s Jensen Huang. Tencent has built a product suite around it. Baidu is hosting public setup events in Beijing. And somewhere in China, engineers are charging 500 yuan to install it on people’s laptops.

So what exactly is OpenClaw, why does it matter, and — most importantly — what does it actually cost to run? This guide breaks it all down.

What Is OpenClaw?

OpenClaw is an open-source AI agent platform. Unlike chatbots such as ChatGPT, which wait for you to prompt them, OpenClaw runs autonomously, 24/7. Think of it as the difference between a dedicated butler and a hotline you call when you need something.

Both can answer questions, but OpenClaw can also execute tasks for you — send emails, manage your calendar, automate workflows, control your browser, and much more. And you don’t need a dedicated app. You just message it — or talk to it — via WhatsApp, Telegram, iMessage, or any of the 50+ supported channels, and it goes off to get your work done.

Why It’s a New Category

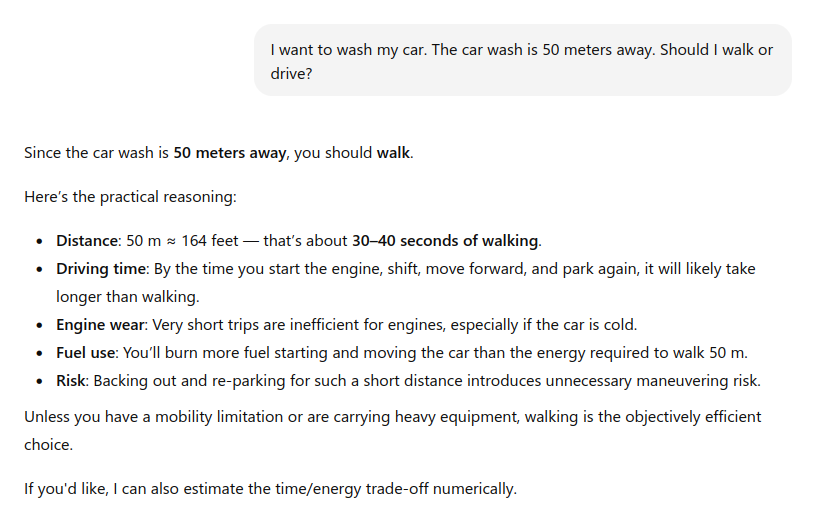

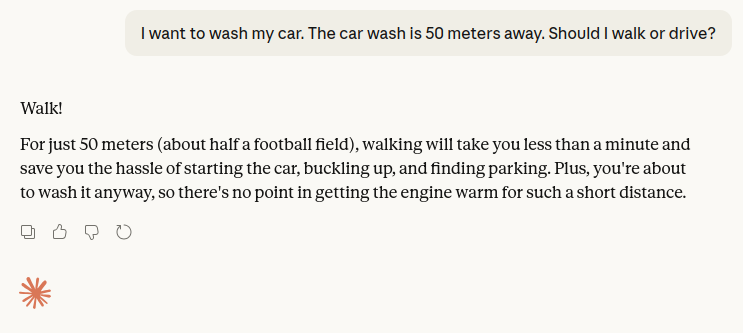

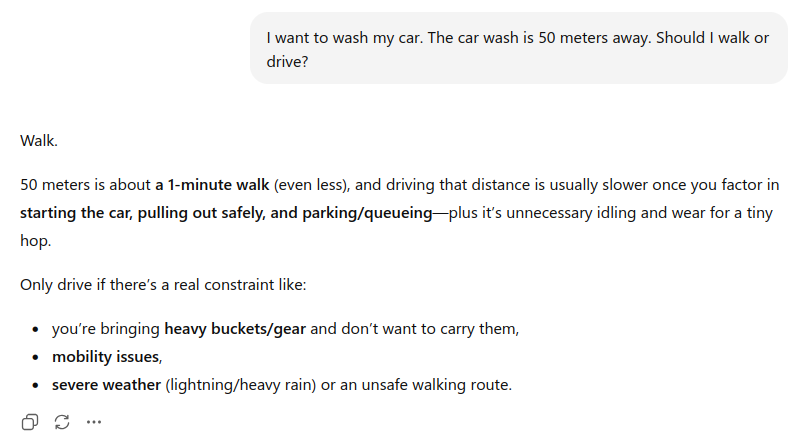

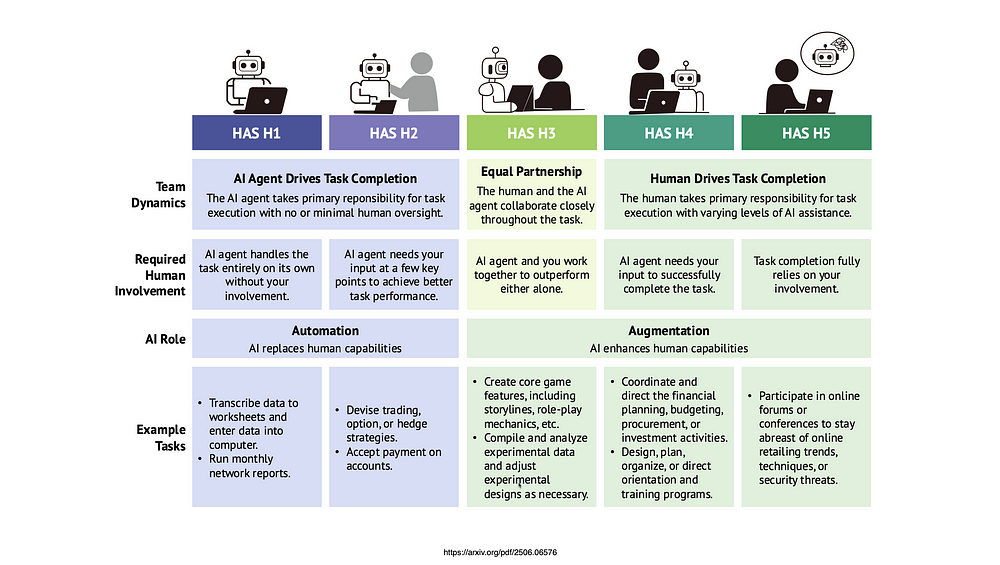

Yes, it’s an AI assistant. But it’s also arguably a new category — affectionately referred to as “Claws” by the community. Before OpenClaw, the AI assistant landscape looked roughly like this:

- SaaS chatbots (ChatGPT, Claude.ai, Gemini): Conversational interfaces locked to a browser. They can reason and generate text or images, but they can’t take action on your systems.

- Automation platforms (Zapier, Make, n8n): Workflow tools that connect apps together. Powerful but rigid — you build explicit if-then pipelines, not natural language instructions.

- Coding agents (Claude Code, GitHub Copilot, Cursor): Deeply integrated into developer workflows but scoped to code.

- Desktop agents (Claude Cowork): They have access to your system and can execute tasks, but they are technically still chatbots and do not run autonomously 24/7.

OpenClaw combines the natural language interface of a chatbot, the action-taking ability of an automation platform, and the autonomy of a coding agent — all running on infrastructure you control. Fortune described it as an “agentic harness”: it’s not an AI model itself, but a framework that connects a model of your choice to your tools, files, and messaging apps, and lets it operate around the clock.

What Does It Cost to Run?

OpenClaw itself is free (MIT license). But “free software” and “zero cost” are very different things. The real expense comes from two sources: hosting (keeping the software running 24/7) and inference (paying for LLM API calls).

Hosting Costs

The core OpenClaw software is lightweight. A Raspberry Pi 5 with 8 GB RAM can run it. A Mac Mini M4 is the community’s most popular choice, drawing about 10–15W idle and costing roughly $15/year in electricity.
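To sanity-check the electricity figure, here is a minimal sketch of the arithmetic. The $0.15/kWh tariff is an assumption for illustration (rates vary widely by region), and `annual_electricity_cost` is just a helper name made up for this example.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours of always-on operation


def annual_electricity_cost(watts: float, usd_per_kwh: float) -> float:
    """Yearly cost of a device that draws `watts` continuously."""
    kwh_per_year = watts * HOURS_PER_YEAR / 1000
    return kwh_per_year * usd_per_kwh


# Assumed tariff, not taken from the article: $0.15 per kWh.
ASSUMED_RATE = 0.15

for watts in (10, 15):
    cost = annual_electricity_cost(watts, ASSUMED_RATE)
    print(f"{watts} W idle -> ${cost:.2f}/year")
```

At that assumed rate, the 10–15W idle range works out to roughly $13–$20 per year, which brackets the $15 figure. Sustained load would push the number higher.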

For cloud hosting, a basic VPS with 2 vCPU and 4 GB RAM is sufficient for most use cases. Pricing ranges from free (Oracle Cloud’s Always Free tier) to $5–$15/month on providers like Hetzner, DigitalOcean, or Hostinger. Browser automation adds 1–2 GB RAM per Chrome instance, so factor that in if you plan to use it heavily.
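If you plan to lean on browser automation, a rough sizing sketch like the one below can help you pick a VPS tier. It treats the 4 GB baseline and the 1–2 GB per Chrome instance from the paragraph above as additive, which is a simplifying assumption. The function name is hypothetical.

```python
def estimated_ram_gb(
    chrome_instances: int,
    base_gb: float = 4.0,
    per_chrome_gb: tuple[float, float] = (1.0, 2.0),
) -> tuple[float, float]:
    """Return a (low, high) RAM estimate in GB for a given number of Chrome instances."""
    low, high = per_chrome_gb
    return (
        base_gb + chrome_instances * low,
        base_gb + chrome_instances * high,
    )


for n in (0, 1, 2, 4):
    low, high = estimated_ram_gb(n)
    print(f"{n} Chrome instance(s): {low:.0f}-{high:.0f} GB RAM")
```

Two concurrent Chrome instances already suggest a 6–8 GB plan under these assumptions, so the cheapest VPS tiers may not be enough for heavy browser work.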

Inference Costs (The Big Variable)

This is where the real money goes. Every conversation turn, every automation step, every tool call triggers an API request to your chosen LLM provider. The context sent with each request fills up fast: system prompts, memory files, tool definitions, and conversation history are all loaded into every turn, so each call consumes a significant number of input tokens regardless of how short your message is.
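To see why token use climbs so quickly, consider a simplified model in which every turn resends a fixed overhead plus the full history so far. The numbers below (8,000 tokens of fixed overhead, 500 tokens added per exchange) are purely hypothetical, and the sketch assumes no history truncation or summarization, which a real deployment may well apply.

```python
def input_tokens_for_turn(turn: int, fixed_overhead: int, tokens_per_exchange: int) -> int:
    """Input tokens sent on a 1-based turn: fixed overhead plus all earlier exchanges."""
    history = (turn - 1) * tokens_per_exchange
    return fixed_overhead + history


def total_input_tokens(turns: int, fixed_overhead: int, tokens_per_exchange: int) -> int:
    """Cumulative input tokens across a whole conversation."""
    return sum(
        input_tokens_for_turn(t, fixed_overhead, tokens_per_exchange)
        for t in range(1, turns + 1)
    )


# Hypothetical values chosen for illustration only.
FIXED_OVERHEAD = 8_000       # system prompt + memory files + tool definitions
TOKENS_PER_EXCHANGE = 500    # one user message plus one reply

for turns in (1, 5, 20):
    total = total_input_tokens(turns, FIXED_OVERHEAD, TOKENS_PER_EXCHANGE)
    print(f"{turns:>2} turns -> {total:,} input tokens")
```

Under these assumptions a 20-turn conversation sends about a quarter of a million input tokens, most of it repeated overhead rather than new content.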

Realistic monthly ranges based on community reports:

| Usage Level | Description | Typical Monthly Cost |
|---|---|---|
| Light | A few dozen messages/week, simple automations | Under $5 |
| Regular | Daily use, moderate automations | $15–$30 |
| Heavy | Thousands of multi-step workflows, browser automation | $50–$150 |
| Runaway | Unmonitored automations left running | $200–$1,000+ |

Model choice matters enormously. A single typical interaction (~1,000 input tokens, ~500 output tokens) costs about $0.00045 with GPT-4o-mini versus $0.0075 with GPT-4o, a difference of roughly 17×. Routing 80% of routine tasks to a budget model while reserving premium models for complex reasoning can cut API spend by 60–80%.
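The per-interaction figures above can be reproduced with a few lines of arithmetic. The per-million-token prices in this sketch are assumed list prices that happen to match the article's numbers. Check your provider's current pricing before relying on them. The routing estimate also assumes routine and complex tasks use the same number of tokens, which is a simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelPrice:
    """USD per one million tokens."""
    input_per_million: float
    output_per_million: float

    def cost(self, input_tokens: int, output_tokens: int) -> float:
        return (
            input_tokens * self.input_per_million
            + output_tokens * self.output_per_million
        ) / 1_000_000


# Assumed prices; they reproduce the article's figures but may have changed.
GPT_4O_MINI = ModelPrice(input_per_million=0.15, output_per_million=0.60)
GPT_4O = ModelPrice(input_per_million=2.50, output_per_million=10.00)

budget = GPT_4O_MINI.cost(1_000, 500)
premium = GPT_4O.cost(1_000, 500)


def blended_cost(budget_share: float) -> float:
    """Average cost per interaction when `budget_share` of calls use the budget model."""
    return budget_share * budget + (1 - budget_share) * premium


savings = 1 - blended_cost(0.8) / premium

print(f"budget model:  ${budget:.5f} per interaction")
print(f"premium model: ${premium:.5f} per interaction")
print(f"ratio: {premium / budget:.1f}x")
print(f"savings with 80% routed to the budget model: {savings:.0%}")
```

With these inputs, an 80/20 split saves about 75% compared with sending everything to the premium model, which sits inside the 60–80% range quoted above.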

One cautionary data point: a developer reported consuming roughly 40 million input tokens and 865,000 output tokens over just four days of active use, which would have cost about $135 at standard Bedrock pricing — roughly $1,000/month at that rate. The lesson: monitor your usage from day one.
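Here is a quick sketch of how that four-day bill extrapolates to a month. The article does not name the model or its rates, so the $3 and $15 per million tokens used below are placeholder assumptions, picked only because they land close to the quoted $135. Treat them as illustrative rather than as the developer's actual pricing.

```python
def token_cost(input_tokens: int, output_tokens: int,
               input_per_million: float, output_per_million: float) -> float:
    """Cost in USD for a batch of tokens at the given per-million rates."""
    return (
        input_tokens * input_per_million
        + output_tokens * output_per_million
    ) / 1_000_000


def project_monthly(cost_usd: float, days_observed: int, days_in_month: int = 30) -> float:
    """Linear extrapolation of observed spend to a full month."""
    return cost_usd / days_observed * days_in_month


# Token counts from the report; the rates are assumptions (see above).
ASSUMED_INPUT_RATE = 3.00
ASSUMED_OUTPUT_RATE = 15.00

four_day_cost = token_cost(40_000_000, 865_000, ASSUMED_INPUT_RATE, ASSUMED_OUTPUT_RATE)

print(f"estimated four-day cost: ${four_day_cost:.2f}")
print(f"projected from estimate: ${project_monthly(four_day_cost, 4):.2f}/month")
print(f"projected from quoted $135: ${project_monthly(135, 4):.2f}/month")
```

Note that input tokens account for the bulk of the estimate, which is what you would expect when a large context is resent on every turn.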

The Hidden Cost: Your Time

Self-hosting means you’re responsible for updates, security patches, monitoring, and troubleshooting. Between January and March 2026, OpenClaw disclosed 9+ CVEs across three patch cycles. The ClawHub skill registry has had documented supply-chain attacks, with an estimated 20% of third-party skills flagged as potentially malicious. This is not a “set it and forget it” deployment — it requires ongoing operational attention.

Deployment Options: Pros and Cons

There are three main ways to run OpenClaw. Each trades off cost, control, and complexity differently.

Option 1: Self-Hosted (Your Own Hardware)

You run OpenClaw on a machine you physically own — a Mac Mini, a Raspberry Pi, an old laptop, or a home server.

Pros:

- Maximum privacy: your files, memory, and logs stay on your own hardware. Prompts still go to your LLM provider unless you also run a local model.

- No recurring hosting fees beyond electricity.

- Full access to iMessage integration (macOS only) and local model inference via Ollama.

- Complete control over configuration, skills, and security policies.

- Can run local LLMs to eliminate API costs entirely (with hardware trade-offs).

Cons:

- You are your own ops team. Uptime depends on your hardware, power, and internet reliability.

- Requires comfort with the terminal, Node.js, Docker, and networking concepts.

- Security is entirely your responsibility — patching, firewall rules, skill vetting.

- No easy remote access without additional setup (Tailscale, SSH tunnels, etc.).

- Hardware investment: a Mac Mini M4 starts at ~$600; a Raspberry Pi 5 kit at ~$100.

Best for: Developers and power users who want full control and are comfortable with infrastructure. Budget Year 1 (hardware + moderate API usage): $1,000–$2,000.

Option 2: Cloud VPS (Self-Managed)

You rent a virtual server from a cloud provider (DigitalOcean, Hetzner, Hostinger, Contabo, Oracle Cloud, etc.) and install OpenClaw on it. Several providers now offer one-click deployment templates.

Pros:

- Always-on by default — no dependency on your home power or internet.

- Low entry cost: $5–$15/month for a capable VPS.

- Some providers (Hostinger, DigitalOcean) offer pre-configured OpenClaw images that simplify setup.

- Easy to scale resources up if needed.

- Oracle Cloud’s Always Free tier can bring hosting cost to literally $0.

- Geographic flexibility — deploy closer to your users or LLM provider endpoints.

Cons:

- You still manage the software: OS updates, OpenClaw upgrades, Docker, SSL, monitoring.

- No iMessage integration (requires macOS).

- Your data lives on someone else’s physical hardware (though you control the VM).

- Limited or no local model inference unless you rent GPU instances ($150–$576+/month).

- Security responsibility remains with you — a misconfigured firewall or exposed port is your problem.

Best for: Technically comfortable users who want reliability without owning hardware. Budget Year 1: $500–$2,000 depending on provider and API usage.

Option 3: Managed SaaS Provider

A growing number of providers — DockClaw, xCloud, BetterClaw, MyClaw.ai, ClawHosters, and others — offer fully managed OpenClaw hosting. You sign up, connect your messaging channels, add your API keys, and start chatting.

Pros:

- Fastest time to value: some providers promise setup in under 5 minutes.

- No infrastructure to manage — the provider handles updates, security patches, monitoring, and uptime.

- Pre-configured messaging integrations (Telegram and WhatsApp typically work out of the box).

- Support channels available for troubleshooting.

- Some bundle AI credits (e.g., Hostinger’s Nexos AI), simplifying billing.

Cons:

- Monthly platform fees on top of API costs: typically $10–$50/month for the hosting layer alone.

- Less control over configuration, skills, and security policies.

- Your data passes through (or is stored on) the provider’s infrastructure.

- Feature availability may lag behind the open-source project.

- Vendor lock-in risk — migrating away requires re-setup.

- The managed OpenClaw hosting space is very young (most launched in early 2026), so track records are thin.

- No local model inference — you’re locked into cloud API providers.

Best for: Non-technical users, small teams, and anyone who values their time over infrastructure control. Budget Year 1: $700–$1,500+ (platform fees + API usage).

Conclusion

OpenClaw is a genuinely new kind of software. It takes the reasoning capability of frontier LLMs and gives it persistent memory, tool access, and an always-on presence in the messaging apps you already use. The community momentum is real — 247K+ GitHub stars, NVIDIA building dedicated tooling around it, and adoption spreading from Silicon Valley developers to Beijing retirees.

But it’s important to go in with clear eyes. OpenClaw is powerful and impressive, and it is also young, security-sensitive, and not free to run despite being open source. A strong model with well-configured skills and careful monitoring will deliver genuinely useful automation. A cheap model with unvetted third-party skills and no spending limits is a recipe for surprise bills and potential data exposure.

If you’re evaluating OpenClaw today, here’s the practical advice:

Start with a cloud VPS and a budget model (GPT-4o-mini, Gemini Flash, or a free-tier option). Keep your first deployment simple — one messaging channel, a handful of built-in skills, and spending alerts configured from day one. Get a feel for the token economics before scaling up. Once you understand your usage patterns, you can make an informed decision about whether to invest in self-hosted hardware, upgrade to a premium model, or hand the infrastructure off to a managed provider.
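As a starting point for spending alerts, here is a minimal sketch of a budget guard. It is not an OpenClaw feature or API. `SpendTracker`, its fields, and the 80% alert threshold are all hypothetical, and in practice you would feed it the per-call costs reported by your LLM provider (or simply use the provider's own billing alerts).

```python
from dataclasses import dataclass


@dataclass
class SpendTracker:
    """Tracks cumulative API spend against a monthly budget (hypothetical helper)."""
    monthly_budget_usd: float
    alert_fraction: float = 0.8   # warn once 80% of the budget is used
    spent_usd: float = 0.0

    def record(self, cost_usd: float) -> str:
        """Add one call's cost and return 'ok', 'alert', or 'stop'."""
        self.spent_usd += cost_usd
        if self.spent_usd >= self.monthly_budget_usd:
            return "stop"
        if self.spent_usd >= self.alert_fraction * self.monthly_budget_usd:
            return "alert"
        return "ok"


tracker = SpendTracker(monthly_budget_usd=30.0)
statuses = [tracker.record(cost) for cost in (10.0, 10.0, 5.0, 6.0)]
print(statuses)
```

The point of returning a status rather than just logging is that an automation loop can check for "stop" and halt itself, which is exactly what unmonitored runaway workflows lack.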

The lobster has molted into something real. Whether it’s ready for your production workload depends entirely on how much you’re willing to invest — not just in dollars, but in operational attention.

Last updated: March 2026. OpenClaw is evolving rapidly. Check the official documentation and GitHub repository for the latest information.